
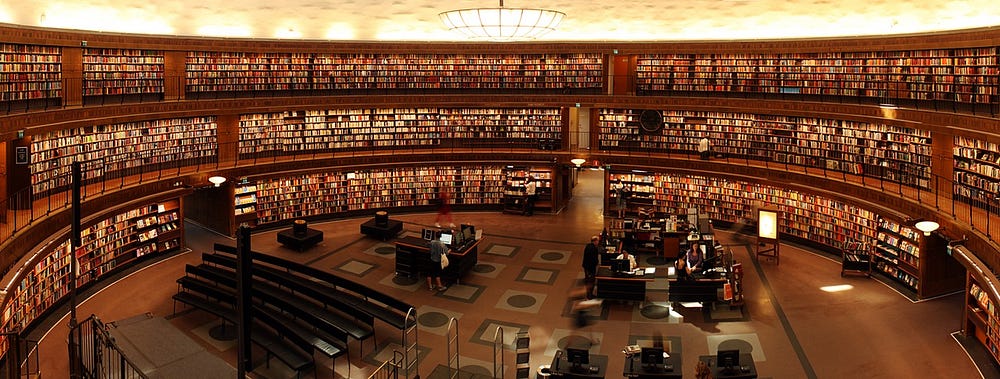

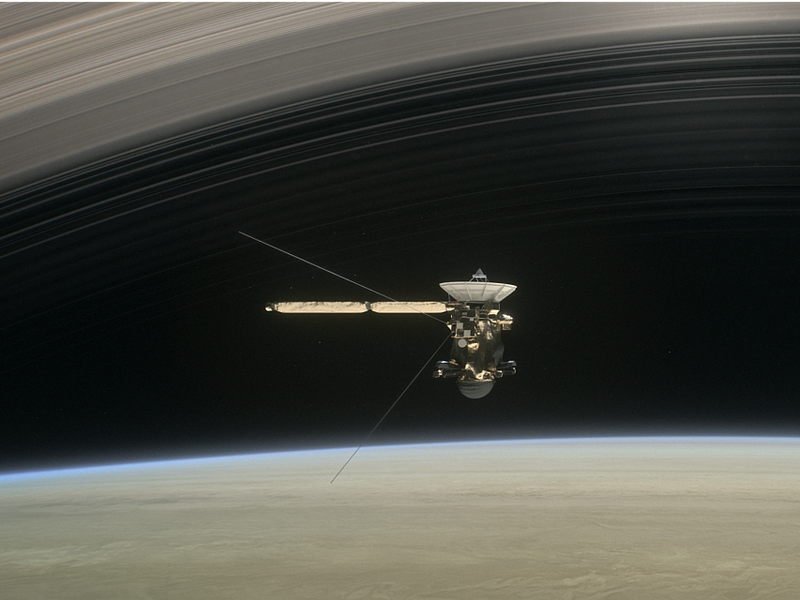

An illustration of Cassini’s “Grand Finale” at Saturn (NASA/JPL)

Semper Exploro.

Always exploring. The spirit of this motto filled my heart as I watched the planned disintegration of the 20-year-old Saturn orbiter Cassini unfold on social media. When the spacecraft finally stopped signalling the homeworld at 11:55 UTC on September 15th, I realised that, although the mission was a resounding success, the spirit of Semper Exploro demands that we send another probe in its place. After all, there is so much left undiscovered in the Saturnian system: what lies under the icy crust of the geyser-spewing moon Enceladus? What do the seas of Titan actually look like? Cassini has left us with even more questions than we had before it entered orbit in 2004 after a 7-year journey across the solar system. And those questions should be answered not merely because they are scientifically relevant (that is a given), but because the spirit of exploration which Cassini embodied, and which Semper Exploro captures so well, has the potential to further unite humanity and bring out the best in us in more ways than any formal ideological framework can. And we need a better alternative today, now more than ever.

Exploring can be a risky venture, but it’s a worthy risk. Indeed, an explorer’s death may be the only kind of death worthy of glorification. We stand today only because of a few men and women who risked it all stepping into the unknown in all kinds of fields. With exploration comes advancement. With curiosity come the gifts of innovation. With ventures comes prosperity. With each expedition into the unknown comes priceless knowledge that uplifts us all as a species. How much more prosperous would we be today had we redirected all the effort we spend killing or dominating one another towards settling space, curing illnesses and so forth? The most logical answer would be: many times over, perhaps a thousandfold.

Films and shows like Star Trek that attempt to portray the future as an advanced utopia are sometimes criticised for being overoptimistic, naive and ignorant of ‘reality on the ground’. But reality is only what we allow it to be. If we want, we could have a Star Trek kind of future right now. If we want, we could have institutions whose only purpose is to explore, discover and advance peaceful prosperity across the stars. Yes, even with our severely flawed humanity we can still have our cake and eat it IF we believe we can. After all, this flawed humanity is the same humanity that has created the better present we see today. And experience can serve as a catalyst; people today are more motivated than ever to improve not just their own circumstances, but the circumstances of everyone else around them, simply because of inspired hope. The momentum created by this hopeful belief means that, for the most part, we have nowhere else to go but up.

We cannot hope to have a smooth ride to the future. But fantastic endeavours like the Cassini mission can help remind us of, and solidify, our shared desire as a species: to see what’s on the other side of the distant horizon. To learn and grow wiser. And to do it with everyone around us.

If we can just keep on exploring, perhaps one day we might discover an even better version of ourselves than we could ever have imagined. But we must keep exploring. Semper Exploro forever, Cassini.